The Wrong Zoom Level

Why tokens are too small, files are too big, and concepts may be the real unit of intelligence

I have been thinking a lot about zoom levels.

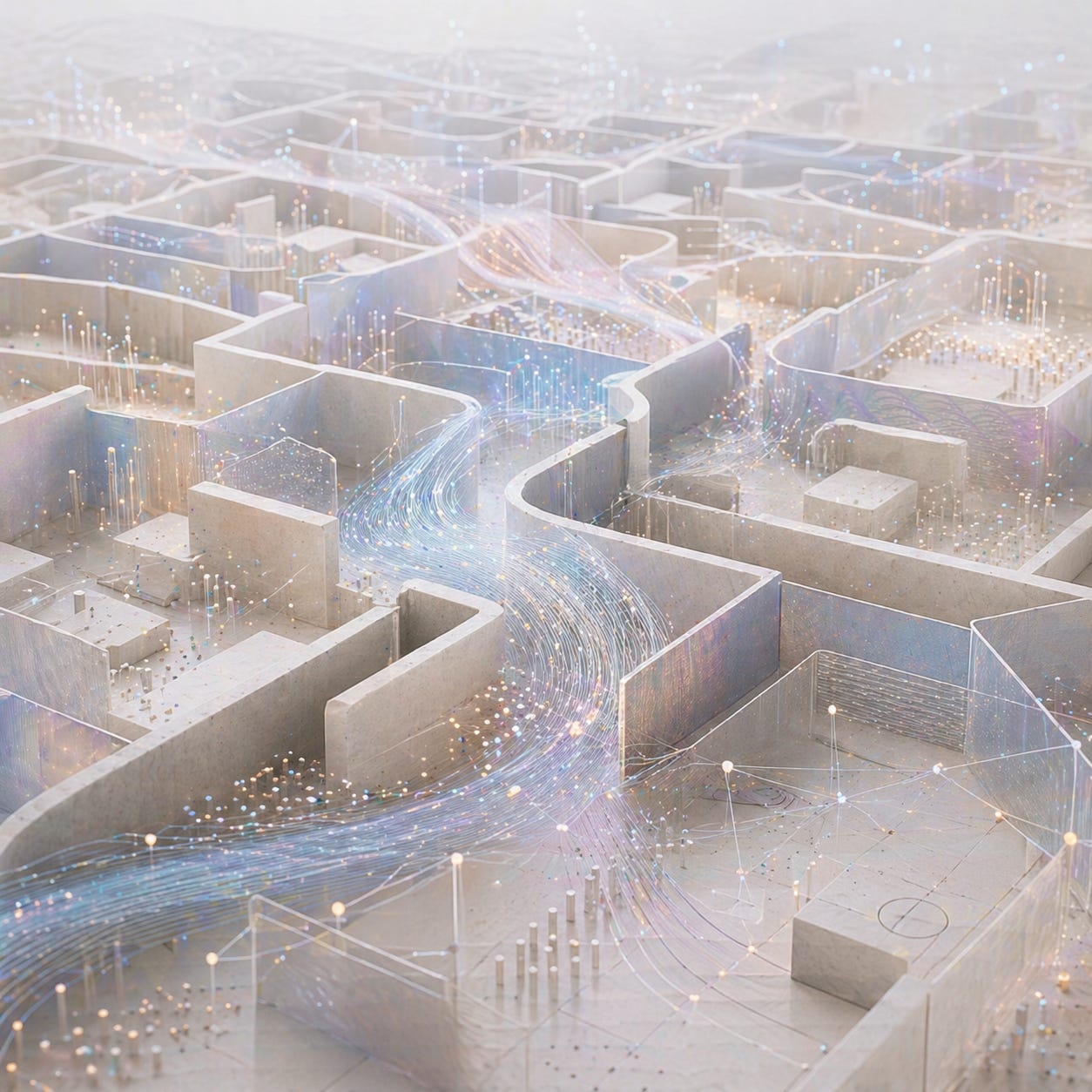

That may sound like a rather odd place to begin an essay about intelligence, software, and AI architecture. But the truth is, I increasingly suspect that many of our biggest mistakes in AI are not mistakes of ambition. They are mistakes of scale.

We are looking at the world through the wrong lens, at the wrong resolution, and then acting surprised when the answers come back blurred.

For the last few years, much of the industry has behaved as though intelligence were mostly a problem of volume. Bigger models. Bigger context windows. Bigger corpora. More retrieval. More files. More transcripts. More chat history. More everything. And I understand why that instinct has been so seductive. In life, ignorance is often solved by more information. So we carry that intuition over to machines and assume that if the model is getting things wrong, perhaps the answer is simply to give it more to chew on.

But more is not the same thing as better.

In fact, sometimes more is simply what you do before you have discovered the right abstraction.

That, I think, is the deeper story here.

When I look at the modern AI stack, I see two levels that dominate almost everything. At the bottom, there are tokens: sub-word fragments, useful for prediction, but far too small to carry stable meaning on their own. At the top, there are files: documents, PDFs, chat threads, repositories, decks, pages — all the containers in which knowledge happens to be stored. These are useful for workflow and bureaucracy, but they are usually far too coarse to serve as the natural unit of thought.

And somewhere in the middle sits the thing that actually matters.

The concept.

That is the layer I feel we can no longer ignore.

Because a token is too small to think with. A file is too big to think with. But a concept has an entirely different property: it survives its own expression.

“Authentication” is still authentication whether it shows up in a PRD, a Python service, a React flow, or a security diagram. “STEMI” is still STEMI whether it appears in a textbook, a handover note, a clinical summary, or a doctor’s verbal shorthand. The words change. The containers change. The concept remains.

That survival matters more than most current AI systems admit.
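One way to make "a concept survives its own expression" concrete is to treat concepts as stable keys and treat the surface wording as something to resolve away. The sketch below does this with a hand-written lookup. The alias table, the `concept:` identifiers, and the `Mention`, `resolve`, and `group_by_concept` names are all hypothetical. A real system would need genuine concept extraction rather than a fixed dictionary.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Mention:
    container: str  # where the text lives, e.g. a PRD or a source file
    surface: str    # the words actually used in that container


# Hypothetical alias table: many surface forms map to one stable concept id.
ALIASES: Dict[str, str] = {
    "authentication": "concept:authentication",
    "auth": "concept:authentication",
    "login flow": "concept:authentication",
    "stemi": "concept:stemi",
    "st-elevation myocardial infarction": "concept:stemi",
}


def resolve(mention: Mention) -> Optional[str]:
    """Map a surface form to its concept id, or None if it is unknown."""
    return ALIASES.get(mention.surface.strip().lower())


def group_by_concept(mentions: List[Mention]) -> Dict[str, List[str]]:
    """Collect the containers in which each concept appears."""
    grouped: Dict[str, List[str]] = {}
    for mention in mentions:
        concept_id = resolve(mention)
        if concept_id is not None:
            grouped.setdefault(concept_id, []).append(mention.container)
    return grouped


mentions = [
    Mention("PRD", "Authentication"),
    Mention("auth_service.py", "auth"),
    Mention("React flow", "Login flow"),
    Mention("handover note", "STEMI"),
    Mention("textbook", "ST-elevation myocardial infarction"),
]
print(group_by_concept(mentions))
```

The containers and the wording differ from one mention to the next. Each group still collapses onto a single key, and that key is the first-class unit the argument is asking for.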

The part that fascinates me most is that compression gains across layers do not add. They multiply.

A 5x compression at one layer followed by another 5x compression at the next does not leave you 10x better off. It leaves you 25x better off. Keep stacking the right abstractions and the effect becomes extraordinary. Seven fairly modest layers at 5x each yield 5⁷ = 78,125x.

At that point, you are no longer talking about efficiency in the narrow sense. You are talking about a different order of thought altogether.
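The compounding arithmetic is easy to check directly. This sketch assumes each layer applies its full ratio to the output of the layer below it. That is an idealization, not a measured result, and the function names are illustrative.

```python
from math import prod
from typing import List


def additive_intuition(ratios: List[float]) -> float:
    """The naive reading: per-layer gains simply add up."""
    return sum(ratios)


def compounded(ratios: List[float]) -> float:
    """Stacked layers: each ratio applies to the output of the layer below."""
    return prod(ratios)


print(additive_intuition([5, 5]))  # 10
print(compounded([5, 5]))          # 25
print(compounded([5] * 7))         # 78125
```

The gap between `sum` and `prod` is the whole argument in miniature. It stays small for two layers and becomes enormous by seven.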

This is why a deceptively small example, “one plus one” versus “1+1”, matters so much to me.

At first glance it looks trivial, almost childish. Of course “1+1” is shorter. Of course notation is more efficient. But that is precisely the point. It is not merely shorter. It is cognitively stronger. The compressed form becomes an atom that can compose into higher structures. It makes the next operation easier, and then the one after that. And once you begin stacking those abstractions — positional notation, operators, algebra, calculus — you do not simply get less ink on the page. You get a new kind of mind.
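A toy version of the rewrite from “one plus one” to “1+1” shows both halves of the claim. The compact form is shorter: 12 characters become 3. It is also directly operable by the next step. The word-to-symbol table and the helper names here are hypothetical, and the table covers only a tiny fragment of arithmetic.

```python
from typing import Dict

# Hypothetical word-to-symbol table for a toy notation.
NOTATION: Dict[str, str] = {
    "zero": "0", "one": "1", "two": "2", "three": "3", "plus": "+",
}


def to_notation(phrase: str) -> str:
    """Rewrite a spoken arithmetic phrase into compact symbolic notation."""
    return "".join(NOTATION[word] for word in phrase.split())


def evaluate_sum(expr: str) -> int:
    """Evaluate a '+'-only expression written in the compact notation."""
    return sum(int(term) for term in expr.split("+"))


phrase = "one plus one"
compact = to_notation(phrase)
print(compact)                     # 1+1
print(len(phrase) / len(compact))  # 4.0
print(evaluate_sum(compact))       # 2
```

Nothing here is clever, and that is the point. Once the notation exists, `evaluate_sum` can work on “2+3+1” just as easily as on “1+1”. The compressed form composes, and the spoken form would have to be parsed all over again.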

Mathematics did not become humanity’s most powerful intellectual technology because it learned to speak more beautifully.

It became powerful because it learned to compress reality into reusable atoms.

That pattern, the paper argues, repeats across almost everything: software, medicine, law, music, finance, logistics. Across the ten domains the paper works through, the same structural truth keeps resurfacing. When you represent the world at the right atomic layer, the compression ratios are not marginal. They are often startling. And as the layers compose, the leverage compounds. In the paper's worked examples, single-layer compressions range from around 10x to many orders of magnitude beyond that, depending on the domain and the layer.

So when we ask why current AI systems so often feel simultaneously magical and wasteful, I think this is a large part of the answer.

We are still making them work too hard at the wrong level.

We ask them to recover concept-level meaning from token-level fragments. We ask them to infer the thing that should have been stable from files that only accidentally contain it. We throw documents into context windows and call that memory. We call retrieval “understanding” when, more often than not, it is simply the return of a box rather than the living thing inside it.

There is a lovely line hidden inside this entire argument: warehouses are not minds.

A warehouse stores.

A mind selects.

A warehouse preserves everything.

A mind decides what matters.

That is why I increasingly think a great deal of what we call memory in AI is really just hoarding with better UX. More persistence, yes. More retention, yes. But without the right unit of compression, memory becomes clutter with excellent branding.

Human beings do not think by replaying every file they have ever encountered. We think through chunks, compressed forms, concept structures, heuristics, symbols, notations, reusable patterns. The expert physician is not scanning one hundred independent data points every time. The chess master is not processing sixty-four squares as unrelated tiles. The expert engineer is not reading a system line by line every time a decision must be made.

Expertise is compressed structure.

Or, if I put it more mathematically, expertise is a library whose description length is far smaller than the surface area of the world it can reconstruct. In the paper’s terms, a good atomic library approximates the sufficient statistics of its domain: exactly enough to reconstruct the relevant phenomenon, and nothing more. That is not just efficiency. That is understanding.
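A crude way to make "description length" concrete is a two-part count: the size of the library plus the size of the data once it has been encoded with that library. The sketch below is a toy proxy and not the paper's method. It counts words and symbols rather than bits and uses greedy longest-match encoding. The workflow phrases and one-letter codes are hypothetical.

```python
from typing import Dict, List, Tuple

Atom = Tuple[str, ...]


def encode(words: List[str], library: Dict[Atom, str]) -> List[str]:
    """Greedy longest-match encoding: replace library atoms with their codes."""
    atoms = sorted(library, key=len, reverse=True)
    encoded: List[str] = []
    i = 0
    while i < len(words):
        for atom in atoms:
            if tuple(words[i:i + len(atom)]) == atom:
                encoded.append(library[atom])
                i += len(atom)
                break
        else:  # no atom matched at this position, so emit the raw word
            encoded.append(words[i])
            i += 1
    return encoded


def description_length(words: List[str], library: Dict[Atom, str]) -> int:
    """Two-part cost in symbols: the library itself plus the encoded data."""
    library_cost = sum(len(atom) + 1 for atom in library)  # atom words + its code
    return library_cost + len(encode(words, library))


flow = "validate input then check permission then handle request"
words = (flow + " ") * 20
words = words.split()  # 160 words: one 8-word flow repeated 20 times

no_library: Dict[Atom, str] = {}
fine_grained: Dict[Atom, str] = {
    ("validate", "input"): "V",
    ("check", "permission"): "P",
    ("handle", "request"): "H",
}
flow_level: Dict[Atom, str] = {tuple(flow.split()): "F"}
bloated: Dict[Atom, str] = {**flow_level, ("parse", "config"): "C"}  # never occurs

for name, lib in [("none", no_library), ("fine", fine_grained),
                  ("flow", flow_level), ("bloated", bloated)]:
    print(name, description_length(words, lib))  # 160, 109, 29, 32
```

The flow-level atom wins because it matches the structure that actually recurs. The extra atom in `bloated` only adds cost. In this toy setting, that cost is the "nothing more" half of the sufficient-statistics idea.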

And yet the stack we have built for AI largely dodges this middle layer. Tokens below. Files above. Concepts implied, but not first-class.

That is why I think the current debate around scale is somewhat incomplete. Bigger models may still matter. Better retrieval may still matter. Longer memory may still matter. But unless we find the right unit in the middle, we will keep spending extraordinary compute to rediscover what should have been stable.

Which leads me to the question I can no longer shake:

if concepts are the right zoom level for thought, what does that mean for software itself?

Because the more one looks at code, the more it begins to resemble the same pattern all over again. We pretend software is files and lines because that is how it is stored. But what keeps recurring are not just lines. They are flows, permissions, handlers, validations, contracts, components, capabilities.

In other words: software has atoms too.

And if that is true, then generating it from scratch every time may be less a miracle than an admission that we still have not found the right library.

Wait for the next essay and the paper!